Ah, Google. An interesting and somewhat shocking review of this article makes one (me) think... hmmm. Thinking back to our discussions about neural networks, the 'Google Brain' (with about 1 million simulated neurons and their simulated connections today) makes deep learning quite fascinating; through a revival, the work has gone on to make deep-learning systems "ten times larger again." Deep learning is defined this way: "massive amounts of data and processing power helps computers crack messy problems that humans solve almost intuitively, from recognizing faces to understanding language." Experts point to this positive shift as driving more applications of deep learning to natural-language understanding, that is, comprehending human discourse. In the 1980s, the idea behind deep learning was applied to facial recognition: these systems tried to make their own rules from scratch and applied those mechanics to achieve brain-like functionality. In the 2000s, speech recognition was explored, and today we see the results of this research in applications such as Google's Android smartphone system and Siri, the iPhone's voice-activated digital assistant, both of which use deep learning. There is another shift as well: deep learning has been useful in scientific tasks such as finding patterns in data sets, predicting drug interactions, and understanding the interactions between body systems. However, one current researcher, Oren Etzioni, points out that he will not use the brain for inspiration. His goal is to invent a computer that can pass a standardized test by reading and understanding diagrams and text: a computer that can actually reason about the facts provided.

Note: The video adds a bit of interesting perspective on this topic.

Forget the Turing Test: Here's how we could actually measure AI

Looking at alternative measures, including language comprehension, this article describes problems with the Turing test and explains how, when and why there is more to intelligence. For example, as the article points out, humans interact through sights, sounds and even the recognition of objects and people. Computers, specifically bots, can decipher text, but it is much harder for a computer to recognize a mother's face, grasp the feeling when a soccer player scores a goal, or even notice the emphasis placed on certain nouns or verbs in a sentence and make meaning from it. The computer can interpret the text and then reason about it, but is that enough? In the soccer example, a learning machine could provide facts and details about soccer, but would that be understanding? The article points out a shift toward a different kind of measure: rather than judging a system by whether it matches the capabilities and flexibility of a human, we might focus on the quest to "organize the world's information."

A Computer Just Passed the Turing Test in Landmark Trial

“The new form of the problem can be described in terms of a game which we call the ‘imitation game.’”

What Turing meant was: can a computer trick a human into thinking it’s actually a fellow human? That question gave birth to the “Turing Test” 65 years ago. Supposedly (and I use this word very loosely), the computer passed the test by convincing 33 percent of the human interrogators that it was not a machine but a human, in this case a 13-year-old boy named Eugene from Ukraine. The article points out how the test might have been skewed because of the language, but what I think is more important, and what really scares me, is the implications for our trust and how this type of research affects what we know about online communication. How many times have we heard of a person taking on the persona of a 'child' to dupe an unsuspecting child into believing whatever they say, and the child then getting into trouble because of it? It is foolery at a level we can't turn a blind eye to, or, as the article puts it, being "fooled into believing something is true... when in fact it is not."

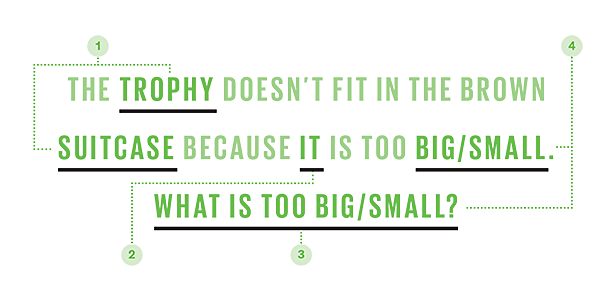

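It was a publicity stunt, but it actually generated much interest from the AI community. This article provides a cool look at the anatomy of an AI test, specifically Winograd schemas, which might be a better test of human-level artificial intelligence because they require reasoning. For example, answering "The trophy doesn't fit in the brown suitcase because it is too big. What is too big?" depends on knowing how objects and containers work, not on matching words.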

A Pedagogical Agent as an Interface of an Intelligent Tutoring System to Assist Collaborative Learning

Intelligent Tutoring Systems

This article adds to the myriad of articles about Intelligent Tutoring Systems (ITS). I added it to my repository because of its compact and easily digestible information. My concern with ITS is the idea that we don't need an educator or curriculum to help shape a student's learning, and that we turn to these systems to replace 'learning.' Because we place so much emphasis on high-stakes testing, these systems could easily be misinterpreted as adding so much value that we start replacing really good teaching practices with an ITS. I agree that an ITS could be an excellent supplement, but I would not want to enter a school building and see classrooms filled with computer stations (think cubicles) and students with headphones on as they 'interact' with these systems for their entire time as a student. I believe that conversations, connections and collaboration (think emotional connections to the experiences) are still important to student learning. If we rely on a computer to 'think' with us, what happens when a student is presented with a problem in the real world? Who will 'think' for the student and guide his thoughts through the process? Although I believe that ITS do have a place, they should serve what I believe is their intended role: a tutor, not a coach, and not the teacher.

The Three Breakthroughs That Have Finally Unleashed AI on the World: What May Lie Ahead

Watson, the electronic genius (as the article calls it) that beat the human contestants on Jeopardy!, seems like it was yesterday, but it happened in 2011... wow, artificial intelligence has definitely come a long way since then! I had no idea how large the original Watson was: the size of a bedroom, with 10 upright, refrigerator-shaped machines forming four walls. Today's Watson exists beyond the walls of those cabinets, across a cloud of servers that run hundreds of "instances" of the AI at once, as the article describes, serving simultaneous customers anywhere in the world. People can access Watson from their phones, computers or even data servers. The cool thing is that, as people use Watson, it gets 'smarter' from everything it learns, and that learning is transferred across its software engines. So what are the three breakthroughs?

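- 1) Cheap Parallel Computation: this relates to neural networks, the primary architecture of AI software. Each node of a neural network loosely imitates a neuron in the brain, interacting with its neighbors to make sense of the signals it receives. Because the nodes all process signals at the same time, running a neural network requires massively parallel computation, which has only recently become cheap.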

- 2) Big Data: "every intelligence has to be taught"; even the human brain needs examples to distinguish objects. Even the best AI computer has to play 1,000 games of chess before it "gets good." As the article points out, the avalanche of data is providing the 'schooling' that AI needs.

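- 3) Better Algorithms: digital neural nets were invented in the 1950s, but it took decades before scientists learned how to manage the enormous number of relationships between the simulated neurons. The key was to organize the networks into stacked layers, each building on the output of the layer below; deep learning is an example of this layered approach to something like logical thinking. Google's search engine is one example of these better algorithms at work.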

- The interesting piece of this article is what all of this means: what are the ramifications of developing bigger data, better algorithms and more cloud convergence? The article points out that we don't know when, how or even where the first AI catastrophe will occur, but that it is decades off... SCARY, because of the digital and highly interconnected world in which we live.
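To make the "node loosely imitates a neuron" idea and the "stacked layers" idea a little more concrete, here is a minimal sketch. It assumes the common textbook simplification of a node as a weighted sum of its incoming signals passed through a sigmoid "squashing" function; it is not how Watson or Google Brain are actually built. The function names (`neuron`, `layer`, `stacked`) and the hand-picked weights in `net` are hypothetical and purely illustrative; in a real system the weights would be learned from large amounts of data rather than chosen by hand.

```python
import math
from typing import List, Tuple

# A layer is described by one weight row per node plus one bias per node.
Layer = Tuple[List[List[float]], List[float]]


def neuron(inputs: List[float], weights: List[float], bias: float) -> float:
    """One node: weighted sum of incoming signals, squashed into (0, 1) by a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))


def layer(inputs: List[float], weight_rows: List[List[float]], biases: List[float]) -> List[float]:
    """A layer of nodes. Each node reads the same inputs independently of its
    neighbours, so this work could be spread across parallel hardware."""
    return [neuron(inputs, row, b) for row, b in zip(weight_rows, biases)]


def stacked(inputs: List[float], layers: List[Layer]) -> List[float]:
    """Stacked ("deep") layers: each layer's output becomes the next layer's input."""
    signal = inputs
    for weight_rows, biases in layers:
        signal = layer(signal, weight_rows, biases)
    return signal


# Hypothetical, hand-picked weights for illustration only (nothing here is trained).
net: List[Layer] = [
    ([[0.5, -0.2], [0.3, 0.8]], [0.0, -0.1]),  # hidden layer: 2 nodes, 2 inputs each
    ([[1.0, -1.0]], [0.0]),                    # output layer: 1 node
]

if __name__ == "__main__":
    print(stacked([1.0, 0.5], net))  # a single value between 0 and 1
```

The list comprehension in `layer` computes the nodes one after another, but because no node depends on another node in the same layer, the same calculation could be run simultaneously on many processors, which is the link to the "cheap parallel computation" breakthrough.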

My thoughts...

An extension of my thoughts on language development, and why I think we have a long way to go before we have an artificially intelligent baby:

https://www.dropbox.com/s/adxbjy21luc7dsr/11162968_10152729882896965_150849984_n.mp4?dl=0

While researching my paper, Constructivism and Artificial Intelligence: Knowledge construction, Patterns of behaviors and BabyX, I found specific behavior patterns that are shaped by the relationship between a stimulus (S) and a desired response (R), and that these patterns differ in a human baby versus an artificially intelligent one. In the case of 'Bowen', for example, consider the stimulus that is provided and her response. I think we have a long way to go until we totally understand the types of influences that cause certain responses in an artificially intelligent 'baby'. Certainly, machines will continue to make breakthroughs and become more sophisticated, as evidenced by the deep learning article, but the unpredictability could lead us onto some dangerous ground. Right now, certain outcomes can be predicted based on historical trends, but as more and more data is consumed by machines, will we as a society begin to rely solely on the output of a machine? I believe that we as a society are somewhat unstable. For example, we still cannot predict how, when or even why tragic events such as the earthquake in Nepal happen, and a computer won't capture the emotional impact of the unrest that follows. Artificial intelligence is capable of providing routine answers, but humanlike responses, contact and even the presence of the interaction are still very far off, in my opinion. It is both fascinating and scary at the same time.